When Lyrics Lead, Music Work Changes Shape

A large part of modern music creation no longer starts with instruments. It starts with phrases. A line appears in a notes app. A chorus arrives while walking. A short emotional sentence sounds stronger than ordinary speech and begins asking for melody. Yet many lyric-first creators still struggle to move from words to something audible. They do not lack imagination. They lack a bridge. That is where an AI music generator becomes meaningful: it offers a way to test whether language can become a song before a full production process ever begins.

This matters because lyric writing and music production have traditionally moved at different speeds. Words can appear quickly, but songs require arrangement, performance, and technical follow-through. AI tools are beginning to narrow that gap. They allow words to drive the process earlier, which changes not only workflow but also who gets to participate. Someone with no studio confidence can still hear whether their lines carry musical life.

The Most Interesting Change Is Not Automation

The popular conversation around AI music often focuses on speed, novelty, or controversy. The deeper shift is structural. For many users, AI is changing the order of creation. Instead of music leading and lyrics adapting later, lyrics can now arrive first and pull the rest of the process forward.

That reversal affects how creators think. A phrase is no longer just a draft waiting for someday. It can become something testable now. It can be sung, placed in a structure, framed by genre, and evaluated emotionally. Even if the first output is imperfect, the writer has crossed an important threshold: the words are no longer silent.

Hearing Lyrics Exposes Their Strength Faster

Silent text can hide weaknesses. A lyric may look moving on screen yet feel flat when sung. Another line may appear too simple on paper yet become memorable once placed in rhythm. Hearing the words changes the standard of judgment. That is a productive kind of pressure.

Writers Gain Feedback Before Full Production

Traditionally, lyric-first creators either waited for a collaborator or tried to imagine the music internally. AI tools introduce another option. They make it possible to hear a provisional song version early enough to revise the writing itself, not just the arrangement around it.

Why ToMusic Makes Sense For Lyric-Driven Users

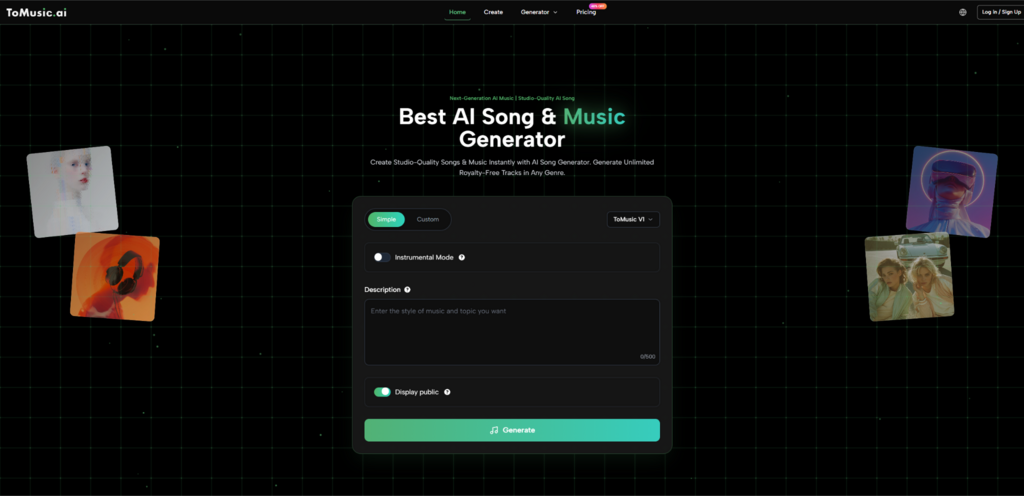

ToMusic is particularly relevant in this context because it visibly supports both text-based prompting and lyric-based creation. The platform’s structure suggests that it understands music creation as more than generic soundtrack generation. A user can select a model, choose between Simple and Custom modes, provide lyrics or descriptive prompts, decide on instrumental or song output, then generate.

For lyric-first creators, that structure matters. It does not force the writing process into the background. Instead, it leaves room for language to act as the main source material. That makes the tool feel less like a novelty box and more like a working bridge between words and sound.

Custom Mode Matters More Than It First Appears

A mode split is easy to overlook, but it changes the entire feel of a product. Simple mode works when the user wants to sketch with descriptive language. Custom mode becomes more important when the writer already has lines, sections, or a concept that deserves more deliberate treatment.

Model Choice Supports Different Song Intentions

Multiple models also matter for lyric users because not every song concept asks for the same output behavior. A quick test draft has different needs from a more polished emotional ballad or a longer compositional idea. Model selection gives the user a way to align the system’s starting point with the intent of the lyrics.

Six AI Music Websites Through A Lyric-First Lens

When evaluated from the perspective of lyric-driven creation, the current AI music landscape becomes easier to sort. The question is not simply which platform is popular. It is which one helps a writer move from language to usable sound in a way that fits the project.

1. ToMusic For Word-To-Song Transition

ToMusic is particularly strong for creators who want to move between descriptive music prompting and more direct lyric-based generation. That combination makes it suitable for people whose ideas begin in written form.

2. Suno For Immediate Song Interpretation

Suno is often a natural stop for creators who want quick full-song interpretation from prompts and text. It helps users hear complete musical takes without much setup.

3. Udio For Prompt-Led Song Construction

Udio belongs in the same core group of AI music sites that let users turn written direction into complete track outputs. It is useful when the priority is hearing a concept translated quickly.

4. SOUNDRAW For Utility And Creator Projects

SOUNDRAW is often better understood as a practical music tool for content creators, especially when the need is project-ready background music rather than lyric-centered songwriting.

5. Mubert For Contextual Soundtrack Creation

Mubert is relevant for creators who need mood-shaped music for content, campaigns, or platform-specific media. It is often less about song identity and more about fit-to-purpose audio.

6. AIVA For More Structured Compositional Exploration

AIVA becomes attractive when the user wants more style range and a composition-aware environment. For some writers, that broader musical framing can be useful when moving beyond the first concept stage.

Why ToMusic Feels More Direct For Lyric Users

A lyric-first workflow needs something very specific from software: it must respect words as primary input. Too many creative tools still behave as though language is a secondary instruction set attached to a music engine. ToMusic appears more useful because it places lyric handling in the visible core of the product rather than hiding it as an afterthought.

That changes the emotional quality of the workflow. The user is not merely decorating a beat with words. They are starting from words and asking the platform to build outward. For writers, this feels far more natural.

The Platform Lets Language Carry Structure

When lyrics can be entered directly, section thinking becomes more relevant. Verse, chorus, and transitions are no longer imagined abstractly. They become part of the prompt logic. This helps writers hear whether their own structure is actually working.
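One way to make that section thinking concrete before pasting lyrics anywhere is to group a draft by its section labels. The sketch below assumes the common convention of bracketed labels such as `[Verse]` and `[Chorus]`; the source does not say what format ToMusic expects, so the label syntax and the helper name `split_sections` are illustrative assumptions only.

```python
import re

# Assumed convention: a section label sits alone on its line, e.g. "[Chorus]".
SECTION_TAG = re.compile(r"^\[(?P<name>[^\]]+)\]\s*$")


def split_sections(lyrics: str) -> list[tuple[str, list[str]]]:
    """Group lyric lines under the most recent bracketed section label.

    Lines that appear before any label are collected under "Untitled".
    A label with no lyric lines beneath it is dropped.
    """
    sections: list[tuple[str, list[str]]] = []
    current_name = "Untitled"
    current_lines: list[str] = []

    for raw in lyrics.splitlines():
        line = raw.strip()
        if not line:
            continue  # blank lines only separate sections visually
        match = SECTION_TAG.match(line)
        if match:
            # Close the previous section before starting a new one.
            if current_lines:
                sections.append((current_name, current_lines))
            current_name = match.group("name").strip()
            current_lines = []
        else:
            current_lines.append(line)

    if current_lines:
        sections.append((current_name, current_lines))
    return sections


if __name__ == "__main__":
    draft = """
    [Verse]
    I kept your letter in my coat
    All winter long

    [Chorus]
    Say it again
    """
    for name, lines in split_sections(draft):
        print(f"{name}: {len(lines)} line(s)")
```

Seeing the draft as a list of named sections makes it easier to notice a missing chorus or a verse that runs far longer than the others before listening to a generated version.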

The Gap Between Notebook And Audio Gets Smaller

That is perhaps the biggest advantage. Many writers live with partial songs for months because the step from text to audio feels too large. A platform that shortens that leap changes not only productivity but motivation. Once a writer hears possibility, they are more likely to continue refining the piece.

The Actual Workflow Is Simpler Than Expected

The practical process on the platform is simple enough that lyric-first users can follow it without much setup.

Step 1. Choose The Model And Decide The Output Type

The user starts by choosing a model and deciding whether the result should be an instrumental or a fuller song. This is the broad framing step.

Step 2. Choose Between Simple And Custom Modes

The next decision depends on how formed the idea already is. A loose concept can stay in Simple mode. A writer with prepared lyrics usually benefits more from Custom mode, where the system can respond to more direct guidance.

Step 3. Enter The Song Description Or Lyrics

This is where the product becomes most relevant for writers. Users can either describe the music they want or move into a lyrics-to-music workflow by supplying their own words. In practice, this turns the platform into a listening tool for drafts, not just a generation tool for finished ideas.

Step 4. Generate, Listen Back, And Rewrite If Needed

Generation is not the end of the writing process. It often reveals where the lyrics need revision. A line may be too long. A chorus may not resolve cleanly. A mood description may need sharpening. The output becomes a feedback mechanism for better writing.
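The "line may be too long" check can also be roughed out on the text itself between listens. The following is a minimal sketch, not part of any platform: the helper name `flag_long_lines` and the default threshold of 10 words are arbitrary assumptions, and word count is only a crude stand-in for how a line actually sings.

```python
def flag_long_lines(lyrics: str, max_words: int = 10) -> list[tuple[int, int, str]]:
    """Return (line_number, word_count, text) for lines over max_words.

    Blank lines and bracketed section labels such as "[Chorus]" are skipped.
    The threshold is a hypothetical starting point, not a rule.
    """
    flagged: list[tuple[int, int, str]] = []
    for number, raw in enumerate(lyrics.splitlines(), start=1):
        line = raw.strip()
        if not line or line.startswith("["):
            continue
        word_count = len(line.split())
        if word_count > max_words:
            flagged.append((number, word_count, line))
    return flagged


if __name__ == "__main__":
    draft = (
        "[Chorus]\n"
        "Say it again\n"
        "I never meant to leave the porch light burning all night for no one\n"
    )
    for number, word_count, text in flag_long_lines(draft):
        print(f"line {number} has {word_count} words: {text}")
```

A flagged line is not necessarily wrong. It is simply a candidate to listen for in the next generation and to trim if the phrasing sounds rushed.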

A Comparison Table For Different Creative Priorities

| Platform | Primary Use Orientation | Strength For Writers | Best Fit |
|---|---|---|---|
| ToMusic | Prompt and lyric-based song creation | Strong bridge from written words to song drafts | Writers testing both mood and lyric structure |
| Suno | Rapid full-song generation | Fast interpretation of written ideas | Users wanting quick, audible concept checks |
| Udio | Prompt-led complete song creation | Direct path from text to track | Song idea prototyping |
| SOUNDRAW | Creator and royalty-focused utility | Less lyric-centered, more background oriented | Video and content production |
| Mubert | Contextual soundtrack generation | Useful for atmosphere rather than lyrical songs | Podcasts, ads, and visual media |
| AIVA | Broader composition assistance | Helpful for users wanting more structure and style depth | Writers moving toward fuller composition |

How Different People Use This In Reality

Lyric-first music creation is not a niche behavior anymore. It appears across several types of users, each needing something slightly different from AI tools.

Solo Songwriters With Incomplete Drafts

These users often have lines, hooks, and emotional direction but no fast way to hear them. AI tools help them decide whether the writing deserves deeper development.

Content Creators Who Need Memorable Originality

A custom lyric or slogan can become the basis for a distinctive audio identity. This is useful in short-form content, campaigns, and creator branding, where generic stock tracks no longer feel like enough.

Writers Who Are Not Traditional Musicians

This may be the most significant group of all. They may never open a full production environment, but they still want to hear the shape of what they wrote. AI gives them access to musical testing without requiring them to become technical producers first.

The Limitations Are Part Of The Truth

A grounded evaluation is more useful than a celebratory one.

Words Alone Do Not Guarantee Strong Songs

A lyric may be honest, but honesty does not automatically create musical clarity. Structure, repetition, rhythm, and phrasing still matter. AI can reveal those issues, but it cannot make them disappear.

Iterations Are Often Necessary

The first generation may expose potential without fully capturing the intended emotional tone. That is normal. In many cases, the real creative value appears across multiple runs rather than in the first output.

Traditional Craft Still Matters

A strong tool can make the starting point easier, but it does not replace judgment. Writers still need to decide what sounds true, what feels forced, and what deserves revision. AI is not removing craft. It is relocating when and how craft gets exercised.

Why The Lyric-First Future Matters

Music creation is changing shape because language is becoming a more legitimate place to begin. That does not reduce the importance of production, performance, or musical skill. It simply means more creators can reach the first audible stage without being blocked by technical barriers.

From that perspective, ToMusic is valuable not merely because it generates songs. It is valuable because it helps words become testable sooner. For writers, that is not a minor convenience. It changes the emotional economy of creation itself. A lyric no longer has to wait in silence for permission to become music. It can step forward, be heard, and decide what it wants to become next.