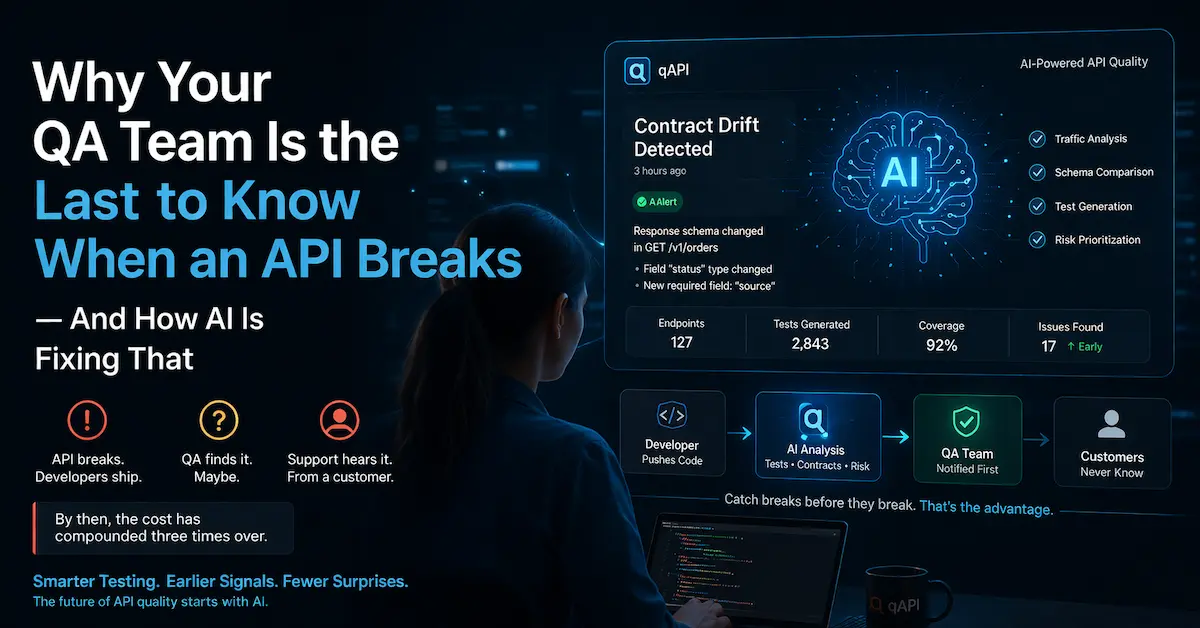

There’s a pattern most engineering teams know but nobody likes to say out loud. The API breaks. The developer who shipped it doesn’t notice. The QA team finds it — maybe. The support team definitely finds it, because a customer told them. By then, the cost of that break has compounded three times over.

This isn’t a people problem. It’s a process problem.

And in 2026 it’s becoming an infrastructure problem.

Because APIs are no longer simple request-response contracts between two services. They’re the connective tissue between your product, your partners, your AI agents, and your customers. When that tissue tears, everything downstream feels it.

And most teams are still relying on the same testing practices they used when APIs were simpler and moved slower.

The question worth asking is: what would it actually look like if your QA team knew about an API problem before anyone else?

The way API testing usually works — and why it breaks down

Most QA teams inherit a testing setup that made sense at a smaller scale. There’s a Postman collection someone built two years ago. There are a few curl scripts in the CI pipeline. There might be a code-based framework if the team is disciplined. The tests run, they pass or fail, and someone triages the failures.

The problem isn’t that these tools are bad. The problem is that APIs change constantly, and the tests don’t keep up. A new field gets added to a response. An endpoint gets deprecated without a proper announcement. A third-party dependency starts returning a subtly different payload. None of these things cause a dramatic failure; they cause a slow, quiet drift between what the API does and what the tests expect. By the time that drift shows up as a production incident, it’s been accumulating for weeks.

The second problem is coverage. The happy path is tested. The obvious error cases might be tested. But the boundaries — what happens at exactly the rate limit, what happens with a payload that’s technically valid but weird, what happens when two services call the same endpoint simultaneously — those gaps stay open because nobody has time to find and fill them all manually.

Writing exhaustive boundary tests for a single CRUD endpoint with six input fields can take an experienced QA engineer the better part of a day. When you have forty endpoints and a two-week sprint, something has to give. It’s always the edge cases.

The result is a test suite that looks comprehensive on a coverage dashboard and is quietly full of holes in practice. Engineers learn to trust it less over time. The red CI badge becomes background noise. And when something genuinely breaks in production, the post-mortem almost always uncovers an edge case that nobody had time to write a test for.

What AI actually changes about this

The honest version of this: AI doesn’t eliminate the need for API testing. What it does is change what humans have to do manually.

The tedious parts of API testing — generating test cases from a spec, identifying which fields need boundary testing, checking whether a new schema change broke existing contracts — are exactly the kind of pattern-matching work that AI handles well. The judgment calls — whether a test result actually indicates a real problem, whether a behavior change is intentional or a bug — still need a human in the loop.

Here’s what that looks like in practice:

Test generation from specs and traffic. Instead of manually writing test cases for every endpoint, an AI layer can ingest your OpenAPI spec and your real traffic patterns, then generate a starting set of tests that covers the cases a human would need hours to enumerate. Not a replacement for human judgment — a starting point that would have taken a sprint to build from scratch.
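The AI part is out of scope here. What can be sketched is the mechanical first step such a layer builds on. The code walks an OpenAPI 3 document and emits one test stub per documented response code. It also emits one negative stub per required parameter.

This rests on a few assumptions:

- The spec has already been parsed into a Python dict, for example with a JSON or YAML loader.
- `generate_test_stubs` and `GeneratedCase` are hypothetical names.
- Path-level parameters and `$ref` references are not resolved.
- Traffic-based generation is not covered.

```python
from dataclasses import dataclass

# Operation keys allowed on an OpenAPI 3 Path Item; anything else is skipped.
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


@dataclass(frozen=True)
class GeneratedCase:  # hypothetical representation of one generated test stub
    method: str
    path: str
    description: str
    expected_status: str  # a documented code such as "200", or "4XX" for any client error


def generate_test_stubs(spec: dict) -> list[GeneratedCase]:
    """Enumerate a starting set of test stubs from an already-parsed OpenAPI 3 dict."""
    cases = []
    for path, path_item in spec.get("paths", {}).items():
        for method, operation in path_item.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as "summary" or "parameters"
            verb = method.upper()
            # One case per response code the spec declares for this operation.
            for status in operation.get("responses", {}):
                cases.append(GeneratedCase(verb, path, f"returns documented {status} response", status))
            # One negative case per required parameter declared inline on the operation.
            for param in operation.get("parameters", []):
                if param.get("required"):
                    description = f"rejects request missing required {param['in']} parameter '{param['name']}'"
                    cases.append(GeneratedCase(verb, path, description, "4XX"))
    return cases


# Usage with a tiny inline spec (illustrative only).
spec = {
    "openapi": "3.0.3",
    "paths": {
        "/users/{id}": {
            "summary": "A single user",
            "get": {
                "parameters": [{"name": "id", "in": "path", "required": True}],
                "responses": {"200": {"description": "OK"}, "404": {"description": "Not found"}},
            },
        }
    },
}
for case in generate_test_stubs(spec):
    print(case.method, case.path, case.expected_status, case.description)
```

These stubs only name the cases. A person or a smarter generator still has to fill in request data and assertions.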

Contract drift detection. This is where AI earns its keep most visibly. When an API response changes — a field is renamed, a type shifts from string to integer, a new required field appears — a system watching your API behavior can flag that drift before it causes a downstream failure. Most teams find out about contract changes from a broken test or a broken client. They could find out from an alert that says “this endpoint’s response schema changed three hours ago.”
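The core of such a drift check can be sketched without any model. The code flattens each JSON response into a map from path to JSON type. It then diffs a baseline map against the current one.

Assumptions and limitations:

- The function names are hypothetical.
- Arrays are assumed to be homogeneous, so only the first element is inspected.
- A renamed field shows up as one removal plus one addition. A structural diff alone cannot tell a rename apart from two unrelated changes.

```python
import json


def json_type(value) -> str:
    """Map a decoded JSON value to its JSON Schema type name."""
    if value is None:
        return "null"
    if isinstance(value, bool):  # check bool before int: bool is a subclass of int
        return "boolean"
    if isinstance(value, int):
        return "integer"
    if isinstance(value, float):
        return "number"
    if isinstance(value, str):
        return "string"
    if isinstance(value, list):
        return "array"
    return "object"


def infer_schema(value, path: str = "$") -> dict[str, str]:
    """Flatten a decoded JSON value into {path: type}, e.g. {"$.id": "integer"}."""
    schema = {path: json_type(value)}
    if isinstance(value, dict):
        for key, child in value.items():
            schema.update(infer_schema(child, f"{path}.{key}"))
    elif isinstance(value, list) and value:
        # Assumes homogeneous arrays: only the first element is inspected.
        schema.update(infer_schema(value[0], f"{path}[]"))
    return schema


def diff_schemas(baseline: dict[str, str], current: dict[str, str]) -> list[str]:
    """Report removed paths, added paths, and paths whose type changed."""
    findings = [f"removed: {p}" for p in sorted(baseline.keys() - current.keys())]
    findings += [f"added: {p}" for p in sorted(current.keys() - baseline.keys())]
    for p in sorted(baseline.keys() & current.keys()):
        if baseline[p] != current[p]:
            findings.append(f"type changed: {p}: {baseline[p]} -> {current[p]}")
    return findings


# Usage: "name" was renamed to "full_name" and "id" changed from string to integer.
old = json.loads('{"id": "42", "name": "Ada", "tags": ["x"]}')
new = json.loads('{"id": 42, "full_name": "Ada", "tags": ["x"]}')
for finding in diff_schemas(infer_schema(old), infer_schema(new)):
    print(finding)
```

In a real pipeline, the baseline map would be stored per endpoint. Every sampled response would then be compared against it, and a non-empty diff would raise an alert instead of printing.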

Smarter test prioritization. Not all tests are equally worth running on every commit. An AI layer that understands which endpoints changed, which tests cover those endpoints, and which tests have historically been flaky can tell your CI pipeline which tests actually matter for this particular change — instead of running everything and making engineers wait.
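The selection step can be sketched as a plain rule-based stand-in, not an AI layer. The code keeps only tests that touch a changed endpoint. It moves tests above a flakiness threshold into a separate, non-blocking list.

It assumes two inputs already exist:

- a map from each test to the endpoints it exercises, for example from coverage tooling or test tags;
- a per-test flake rate from CI history.

`CoverageEntry`, `select_tests`, and the 5% default threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoverageEntry:
    name: str
    endpoints: frozenset[str]  # e.g. {"POST /orders"}; assumed to come from coverage data
    flake_rate: float  # assumed: fraction of recent runs that passed only on retry


def select_tests(
    tests: list[CoverageEntry],
    changed_endpoints: list[str],
    flake_threshold: float = 0.05,
) -> tuple[list[str], list[str]]:
    """Return (blocking, quarantined) test names for the endpoints touched by a change."""
    changed = set(changed_endpoints)
    relevant = [t for t in tests if t.endpoints & changed]  # covers at least one changed endpoint
    blocking = sorted(t.name for t in relevant if t.flake_rate <= flake_threshold)
    quarantined = sorted(t.name for t in relevant if t.flake_rate > flake_threshold)
    return blocking, quarantined


# Usage: a PR that only touches POST /orders.
tests = [
    CoverageEntry("test_get_user", frozenset({"GET /users/{id}"}), 0.0),
    CoverageEntry("test_create_order", frozenset({"POST /orders"}), 0.01),
    CoverageEntry("test_checkout_flow", frozenset({"POST /orders", "POST /payments"}), 0.12),
]
blocking, quarantined = select_tests(tests, ["POST /orders"])
print("blocking:", blocking)
print("quarantined:", quarantined)
```

Flaky tests go to a separate list rather than being dropped. They still run and report, but a flaky failure no longer holds up the merge.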

A real example: cutting testing time by 40%

One team we’ve worked with through qAPI had a familiar problem. They had a large API surface — dozens of endpoints across multiple services — and a QA team that was spending more time maintaining tests than writing them. Every sprint brought new endpoints, and the test suite lagged two or three sprints behind. Coverage was there on paper; in practice, the newest code was always the least tested.

After shifting to an AI-assisted testing setup, the change wasn’t dramatic on day one. What changed over six weeks was the maintenance burden. Instead of manually updating tests every time a response schema changed, the team got flagged automatically. Instead of guessing which tests to run for a given PR, the pipeline ran the relevant subset. The 40% reduction in testing time wasn’t a magic trick — it came from eliminating the overhead that had nothing to do with actually verifying behavior.

The QA team didn’t shrink; it redirected its effort. Less time on bookkeeping, more time on the cases that genuinely required human judgment: the security edge cases, the complex business logic assertions, the multi-service end-to-end flows that can’t be generated automatically because they require understanding how the system is supposed to behave, not just what it currently returns.

What this means for QA teams specifically

There’s an understandable anxiety in QA circles about AI-assisted testing. If the tool generates the tests, what’s left for the QA engineer to do?

The honest answer is: the hard parts. Generating 200 test cases for a CRUD endpoint is not the intellectually demanding part of QA work. Deciding which behaviors actually matter for your business, reading the failure signals and distinguishing a real problem from a flaky environment, designing the test scenarios that cover the cases nobody thought to spec — that’s where QA expertise is irreplaceable.

AI-assisted testing tools change the ratio. Less time on generation and maintenance. More time on judgment and coverage strategy. That’s a good trade for any team that has more endpoints than hours in the sprint.

The practical starting point

If your team wants to move in this direction without a big-bang migration, the entry point is usually contract testing. Start by capturing what your APIs actually return today — schemas, status codes, field types — and treating that as the baseline. Any deviation from that baseline is a signal worth investigating.
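One way to record such a baseline can be sketched under simple assumptions. A snapshot holds three things: the status code, the content type, and the Python type name of each top-level field. Each snapshot is stored as one JSON file per endpoint.

Scope and assumptions:

- `snapshot`, `save_baseline`, and `deviations` are hypothetical helpers.
- Nested objects and arrays are not walked, for brevity.
- A real setup would take these values from an actual HTTP response rather than the literals used here.

```python
import json
import tempfile
from pathlib import Path


def snapshot(status: int, content_type: str, body: dict) -> dict:
    """Record what an endpoint returns today: status, content type, top-level field types."""
    return {
        "status": status,
        "content_type": content_type,
        "fields": {key: type(value).__name__ for key, value in body.items()},
    }


def save_baseline(path: Path, snap: dict) -> None:
    """Persist a snapshot as the baseline for later runs."""
    path.write_text(json.dumps(snap, indent=2, sort_keys=True))


def deviations(baseline: dict, current: dict) -> list[str]:
    """List every way the current snapshot differs from the stored baseline."""
    problems = []
    for key in ("status", "content_type"):
        if baseline[key] != current[key]:
            problems.append(f"{key}: {baseline[key]!r} -> {current[key]!r}")
    old, new = baseline["fields"], current["fields"]
    for field in sorted(old.keys() | new.keys()):
        if old.get(field) != new.get(field):  # None means the field is absent on that side
            problems.append(f"field {field}: {old.get(field)} -> {new.get(field)}")
    return problems


# Usage: capture today's response once, then compare a later response against it.
with tempfile.TemporaryDirectory() as tmp:
    baseline_file = Path(tmp) / "get_user.json"
    save_baseline(baseline_file, snapshot(200, "application/json", {"id": 42, "email": "a@example.com"}))
    baseline = json.loads(baseline_file.read_text())
    current = snapshot(200, "application/json", {"id": "42", "email": "a@example.com", "role": "admin"})
    print(deviations(baseline, current))
```

Not every deviation is a bug. An added field is often intentional. The point is that a person decides, and then refreshes the baseline on purpose.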

From there, the natural next step is automating the generation of negative test cases: missing required fields, invalid formats, boundary values. These are the cases that exist in every API but rarely get written because they’re tedious to enumerate manually.
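That enumeration can be sketched for a flat JSON request body, under two assumptions:

- The body is described by a small subset of JSON Schema: `required`, `type`, `minimum`, `maximum`, `minLength` and `maxLength`.
- You have one known-valid example payload.

Each case starts from the valid payload and breaks exactly one rule, so a failure points at a single cause. `negative_cases` is a hypothetical name. Exclusive bounds, `format` checks, and nested objects are left out.

```python
def negative_cases(schema: dict, valid: dict) -> list[tuple[str, dict]]:
    """Derive (description, payload) pairs, each violating exactly one schema rule."""
    cases = []
    # 1. Missing required fields: drop one field at a time.
    for field in schema.get("required", []):
        payload = {k: v for k, v in valid.items() if k != field}
        cases.append((f"missing required field '{field}'", payload))
    for field, rules in schema.get("properties", {}).items():
        # 2. Invalid type: a number where a string is expected, otherwise a string.
        if "type" in rules:
            wrong = 12345 if rules["type"] == "string" else "wrong-type"
            cases.append((f"'{field}' has wrong type", {**valid, field: wrong}))
        # 3. Boundary values just outside each declared limit.
        if "minimum" in rules:
            cases.append((f"'{field}' below minimum", {**valid, field: rules["minimum"] - 1}))
        if "maximum" in rules:
            cases.append((f"'{field}' above maximum", {**valid, field: rules["maximum"] + 1}))
        if rules.get("minLength", 0) > 0:
            short = "x" * (rules["minLength"] - 1)
            cases.append((f"'{field}' shorter than minLength", {**valid, field: short}))
        if "maxLength" in rules:
            long = "x" * (rules["maxLength"] + 1)
            cases.append((f"'{field}' longer than maxLength", {**valid, field: long}))
    return cases


# Usage with an illustrative request schema and one valid payload.
schema = {
    "type": "object",
    "required": ["email", "age"],
    "properties": {
        "email": {"type": "string", "maxLength": 254},
        "age": {"type": "integer", "minimum": 0, "maximum": 150},
    },
}
valid = {"email": "ada@example.com", "age": 36}
for description, payload in negative_cases(schema, valid):
    print(description)
```

Each generated payload would then be sent to the endpoint, with the assertion that the response is a client error rather than a 2xx or a 5xx.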

Tools like qAPI are built around exactly this workflow — starting from your existing spec or traffic, generating the test surface you don’t have time to write by hand, and flagging the contract drift that would otherwise stay invisible until it causes a production issue. The goal isn’t to replace your QA process. It’s to make the parts that don’t require human judgment stop requiring human time.

The QA teams that figure this out first will spend the next few years catching problems earlier and shipping with more confidence. The ones that don’t will spend it triaging failures that a slightly smarter pipeline would have caught three sprints ago.