Artificial Intelligence, CPUs and Processors

Nvidia claims 10x cost savings with open-source inference models

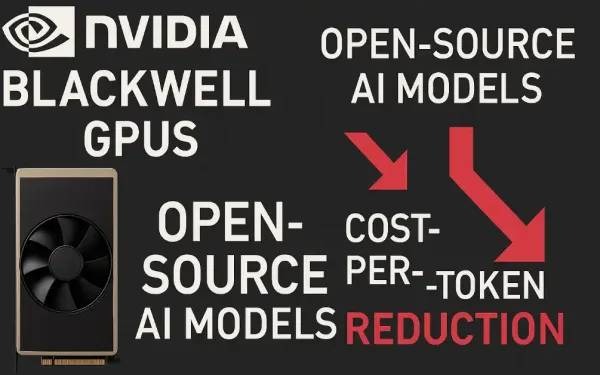

Nvidia has released an analysis showing a 4x to 10x reduction in cost per token for AI inference when switching to open-source models. The cost reductions were achieved by running open-source models on Nvidia’s Blackwell GPU platform through the inference providers Baseten, DeepInfra, Fireworks AI, and Together AI. The analysis reports significant cost improvements across healthcare, gaming, agentic chat, and customer service. […

Intel teams with SoftBank to develop new memory type

Intel and SoftBank are collaborating to develop a new hybrid memory type combining phase-change memory and DRAM for AI data centers. It promises 2x faster access than DRAM, 40% latency…