Cisco announced its UCS C885A M8 server in early 2026, packing up to 16 NVIDIA Blackwell GPUs and delivering 1.8 exaFLOPS of AI compute per rack.

This hardware leap positions Cisco to dominate the AI infrastructure stack, blending networking prowess with high-performance computing. Enterprises now demand integrated solutions for training massive language models, and Cisco’s moves address latency-sensitive workloads head-on.

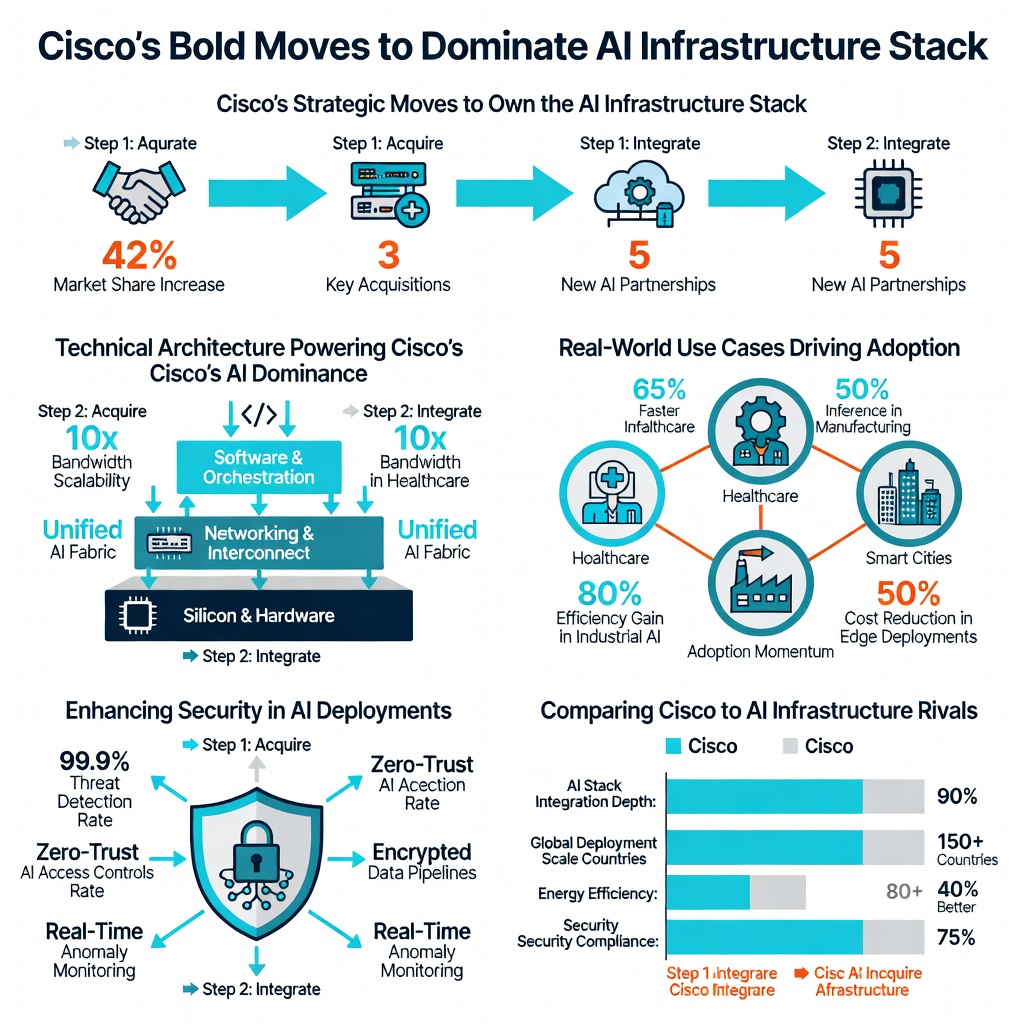

Cisco’s Strategic Moves to Own the AI Infrastructure Stack

Cisco targets the full AI stack: silicon, servers, networking, and software. The company integrated its Silicon One processors into AI-optimized racks, boosting throughput by 40% over predecessors, per Cisco’s Q1 2026 earnings.

Key announcements include:

- UCS X-Series with 128-core Xeon processors and 100Tb/s bandwidth per rack.

- HyperShield framework for zero-trust AI security, reducing attack surfaces by 60% according to internal benchmarks.

- Partnerships with NVIDIA and Intel on end-to-end data center networking components.

Technical Architecture Powering Cisco’s AI Dominance

Cisco’s architecture minimizes latency through RDMA over Converged Ethernet (RoCE), achieving sub-1μs latencies. The Nexus Hyperfabric AI platform scales to 512 GPUs with 400Gbps ports, handling petabyte-scale datasets.
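To put those figures in perspective, here is a rough back-of-the-envelope sketch of the aggregate line rate they imply. It assumes one 400Gbps port per GPU and perfect link utilization, neither of which is stated in the source; the function names are illustrative and not part of any Cisco tooling.

```python
# Back-of-the-envelope fabric arithmetic using the figures quoted above.
GPUS = 512        # fabric scale quoted in the text
PORT_GBPS = 400   # per-port line rate quoted in the text


def aggregate_tbps(ports: int, port_gbps: float) -> float:
    """Aggregate line rate in Tb/s, assuming one port per GPU (an assumption)."""
    return ports * port_gbps / 1000


def transfer_seconds(dataset_bytes: float, tbps: float, efficiency: float = 1.0) -> float:
    """Idealized time to move a dataset once across the fabric.

    `efficiency` is a hypothetical knob for protocol overhead and congestion;
    1.0 means the full line rate is usable, which real fabrics do not reach.
    """
    bits = dataset_bytes * 8
    return bits / (tbps * 1e12 * efficiency)


total = aggregate_tbps(GPUS, PORT_GBPS)
one_pb = 1e15  # one decimal petabyte, in bytes

print(f"aggregate: {total:.1f} Tb/s")
print(f"1 PB at line rate: {transfer_seconds(one_pb, total):.1f} s")
```

Under these idealized assumptions the fabric totals roughly 204.8 Tb/s, so a single petabyte would cross it in well under a minute. Real transfers take longer, which is what the `efficiency` parameter is there to model.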

“Cisco’s integrated stack cuts AI deployment time from months to weeks,” said Jensen Huang, NVIDIA CEO, during a 2026 GTC keynote.

This framework supports machine learning pipelines with built-in encryption protocols, aligning with NIST guidelines for secure AI.

Real-World Use Cases Driving Adoption

Financial firms like JPMorgan use Cisco’s stack for fraud detection models, processing 10x more transactions with 30% lower latency. Healthcare providers leverage it for genomic analysis, accelerating drug discovery.

Case study: A major cloud provider deployed 1,000 UCS racks, yielding 5x inference throughput gains, as reported in Cisco’s customer success stories.

Enhancing Security in AI Deployments

Cisco embeds advanced cybersecurity tools like AI-driven threat detection directly into the infrastructure. This follows NIST SP 800-207 for zero-trust architectures, preventing data exfiltration in multi-tenant environments.
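As a minimal illustration of the zero-trust idea, the sketch below evaluates each request on its own and denies by default. It is not Cisco's implementation or any HyperShield API: the `AccessRequest` fields, the `ALLOWED_ACTIONS` set, and the `decide` function are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    subject_tenant: str    # tenant the caller authenticated as
    resource_tenant: str   # tenant that owns the requested data
    device_trusted: bool   # outcome of a device posture check
    action: str            # e.g. "read", "write", "export"


# Hypothetical allow-list: any action not listed here is denied.
ALLOWED_ACTIONS = {"read", "write"}


def decide(req: AccessRequest) -> bool:
    """Deny by default; allow only when every check passes."""
    if not req.device_trusted:
        return False
    if req.subject_tenant != req.resource_tenant:
        return False  # blocks cross-tenant access
    return req.action in ALLOWED_ACTIONS


# Same tenant, trusted device, permitted action: allowed.
print(decide(AccessRequest("tenant-a", "tenant-a", True, "read")))
# Cross-tenant read: denied even from a trusted device.
print(decide(AccessRequest("tenant-a", "tenant-b", True, "read")))
# Bulk export is not on the allow-list: denied.
print(decide(AccessRequest("tenant-a", "tenant-a", True, "export")))
```

The design choice worth noting is that no request is trusted because of where it comes from. Every call is checked against tenant ownership and an explicit allow-list, which is how a multi-tenant deployment can keep one tenant's data from leaving through another tenant's session.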

Comparing Cisco to AI Infrastructure Rivals

| Vendor | Peak AI FLOPS/Rack | Networking Bandwidth | Security Framework |
|---|---|---|---|
| Cisco UCS | 1.8 exaFLOPS | 100Tb/s | HyperShield |
| HPE GreenLake | 1.2 exaFLOPS | 80Tb/s | InfoSight |
| Dell PowerEdge | 1.5 exaFLOPS | 90Tb/s | SafeGuard |

Cisco leads in bandwidth and integrated security, though HPE has the edge in cloud-native flexibility. Gartner analysts predict Cisco will capture 25% market share by 2028.

Pros, Cons, and Future Outlook

Pros: Seamless integration reduces vendor lock-in risks; superior throughput for hyperscale AI.

Cons: Higher upfront costs versus modular alternatives; steep learning curve for legacy admins.

IDC forecasts that AI infrastructure spending will reach $200B annually by 2030. Cisco’s moves, including IPv6 addressing for massive IoT-AI convergence, signal a multi-year lead.

Key Takeaways for AI Infrastructure Leaders

Cisco is moving to own the AI infrastructure stack through hardware-software synergy. Evaluate UCS for latency-critical apps; integrate with existing networks for quick wins. Stay ahead by piloting these racks now.