The industry is hitting what many creative directors call the “prompt wall.” Over the last year, performance marketing teams have flooded their workflows with generative AI, hoping to bypass the traditional bottlenecks of asset production. Yet, after the initial novelty fades, a hard reality sets in: raw generative output often lacks the precision required for high-conversion ad units. When a model produces a stunning visual but places the subject in a way that obscures the primary headline or introduces a sixth finger on a hand reaching for a product, the “efficiency” of AI evaporates into a cycle of frustrated re-prompting.

For performance marketers iterating at scale, the real ROI of generative technology is shifting. It is no longer about the “lottery” of text-to-image generation; it is about the systematic application of surgical editing. Moving from a generation-heavy model to an editing-centric workflow reduces cost-per-creative and significantly increases speed-to-market by salvaging “near-miss” assets rather than discarding them.

The Production Wall: Why Prompting Alone Fails Performance Teams

The unpredictability of text-to-image models creates a friction point that can stall even the most aggressive campaign launches. When a creative lead requests a series of assets for a new social push, they aren’t just looking for “a cool image.” They require specific aspect ratios, clear focal points, and—crucially—negative space for copy overlays. Most base models are trained on artistic composition rather than the rigid, functional requirements of a Facebook carousel or a landing page hero section.

This disconnect drains departmental resources. A designer might spend 40 minutes trying to “prompt engineer” a background that doesn’t clash with a CTA button, only to end up with 40 iterations that are all slightly off. This is a classic case of using the wrong tool for the final mile. Text-to-image is excellent for ideation and “sketching” the broad strokes of a campaign, but it is a blunt instrument for the final production phase.

Furthermore, the “start over” mentality—where a user discards a 90% perfect image because of one small defect—is an operational failure. In a high-velocity environment, the ability to fix a single element of an image is far more valuable than the ability to generate a thousand new versions of it. This is where the transition to a specialized AI Image Editor becomes a commercial necessity rather than a luxury.

The Logic of Salvage: Precision Refinement Over Total Generation

The most efficient creative teams have begun treating initial AI generations as raw clay rather than finished statues. The goal is to get “close enough” with a prompt and then move immediately into a refinement phase. This shift in mindset acknowledges that AI is currently better at “filling in the gaps” than it is at getting a complex scene perfect on the first try.

Using an AI Image Editor allows a designer to correct lighting inconsistencies, remove distracting background elements, or fix minor anatomical errors without rerunning the entire prompt. If a model generates a perfect interior shot for a home-goods brand but places an ugly, nonsensical lamp in the corner, the old workflow would demand a new prompt. The new workflow simply uses an object eraser or an in-painting tool to replace the lamp with a neutral plant or a window.

This “surgical” approach is vital for maintaining brand consistency. If you are running an ad set across five different demographics, you need the product (the “hero”) to remain identical while the environment or the persona using it changes. Total generation makes this consistency nearly impossible. Refinement tools, however, allow you to lock in the product and modify only the surrounding context.

Building the Variation Engine: Backgrounds and Object Logic

The core of performance marketing is A/B testing. A blue background might convert 12% better in the Midwest, while a warm sunset tone performs better on the West Coast, and the only way to find out is to test both. Traditionally, this required multiple photoshoots or hours of manual masking in Photoshop.

Modern AI tools have turned this into a “variation engine.” By leveraging background removal and replacement, teams can take a single high-quality product shot and place it in a dozen different environments in minutes. This isn’t just about speed; it’s about demographic relevance. You can test a skincare product in a minimalist bathroom, a sun-drenched spa, or a clinical laboratory setting, all using the exact same base asset to ensure the product itself remains the constant in your data.
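As a rough illustration of the “variation engine” idea, the sketch below expands one base product shot into a job list, one entry per background and placement format. The names `VariantJob` and `build_variation_matrix`, the file name, and the aspect ratios are all hypothetical; the actual background replacement would be handled by whichever editing tool the team uses.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class VariantJob:
    base_asset: str    # the locked product shot, identical in every variant
    background: str    # scene to composite behind the product
    aspect_ratio: str  # placement format, e.g. "4:5"

    @property
    def variant_id(self) -> str:
        # Stable, readable ID so ad results can be joined back to the variant.
        stem = self.base_asset.rsplit(".", 1)[0]
        scene = self.background.replace(" ", "-")
        ratio = self.aspect_ratio.replace(":", "x")
        return f"{stem}__{scene}__{ratio}"


def build_variation_matrix(base_asset, backgrounds, aspect_ratios):
    """Return one job per (background, aspect ratio) pair for a single base asset."""
    return [
        VariantJob(base_asset, bg, ratio)
        for bg, ratio in product(backgrounds, aspect_ratios)
    ]


# Hypothetical inputs based on the skincare example above.
jobs = build_variation_matrix(
    "serum_hero.png",
    ["minimalist bathroom", "sun-drenched spa", "clinical laboratory"],
    ["1:1", "4:5", "9:16"],
)

for job in jobs:
    print(job.variant_id)
```

Because every job carries the same `base_asset`, any difference in performance between variants can be attributed to the environment or format rather than to the product image itself.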

Object erasure and manipulation also play a massive role in cleaning up stock photos. Many performance teams rely on stock libraries to keep costs down, but stock photos often feel “stocky” or contain clutter that distracts from the call to action. An AI-driven editor can strip away those distractions, leaving a clean, high-impact visual that looks like a custom-commissioned shoot.

Finally, there is the issue of technical integrity. An asset that looks great on a 5-inch smartphone screen may fall apart when used as a desktop hero image or a physical display. Standardizing an “upscale” phase in the workflow ensures that every asset, regardless of its origin, maintains crisp edges and professional textures across all placements.
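One way to standardize that “upscale” phase is a simple pre-flight check that computes how much an asset must be enlarged before it is safe for a given placement. The sketch below is a minimal example; the placement names and minimum pixel sizes in `PLACEMENT_MIN_PX` are placeholder assumptions, not official platform specs, and should be replaced with the current requirements of each channel.

```python
import math

# Hypothetical minimum pixel sizes per placement (width, height).
# These are illustrative values, not published platform requirements.
PLACEMENT_MIN_PX = {
    "mobile_feed": (1080, 1080),
    "desktop_hero": (2400, 1200),
}


def required_upscale(width: int, height: int, placement: str) -> int:
    """Smallest integer scale factor so the asset covers the placement's minimum size."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    min_w, min_h = PLACEMENT_MIN_PX[placement]
    # The asset must cover both dimensions, so take the larger of the two ratios.
    factor = max(min_w / width, min_h / height)
    # A factor of 1 means the asset is already large enough.
    return max(1, math.ceil(factor))


# A 1024x1024 generation checked against both placements.
for name in PLACEMENT_MIN_PX:
    print(name, required_upscale(1024, 1024, name))
```

The check only answers whether the asset has enough pixels; a mismatch in aspect ratio still needs cropping or background extension as a separate step.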

Navigating Technical Debt: What AI Cannot Fix Safely

It is important to reset expectations regarding the current state of the technology. While AI has made astronomical leaps, there are “no-go zones” where human intervention is still the only reliable path.

The most prominent limitation is integrated typography. While models like Flux have made progress in rendering text, they still struggle with specific brand fonts, kerning, and the nuance of hierarchy. For any asset requiring heavy copy, the “AI text” should be treated as a placeholder at best. A human designer still needs to handle the final typography in a traditional design suite to ensure legibility and brand alignment. Relying on AI for final ad copy within the image is a recipe for “uncanny valley” layouts that diminish brand trust.

Another area of uncertainty lies in complex physical interactions. If your ad requires a person to hold a specific, branded product with a unique shape, AI frequently fails to understand the physics of the grip or the way light reflects off the product’s specific material. In these cases, it is often better to use a real photo of the hand and product, and then use an AI Photo Editor to change the person’s outfit, the background, or the lighting. Attempting to generate a “hand holding a specific SKU” from scratch usually results in a visual mess that requires more time to fix than it would have taken to just snap a quick reference photo.

The Efficiency Equation: Integrating an AI Photo Editor into the Stack

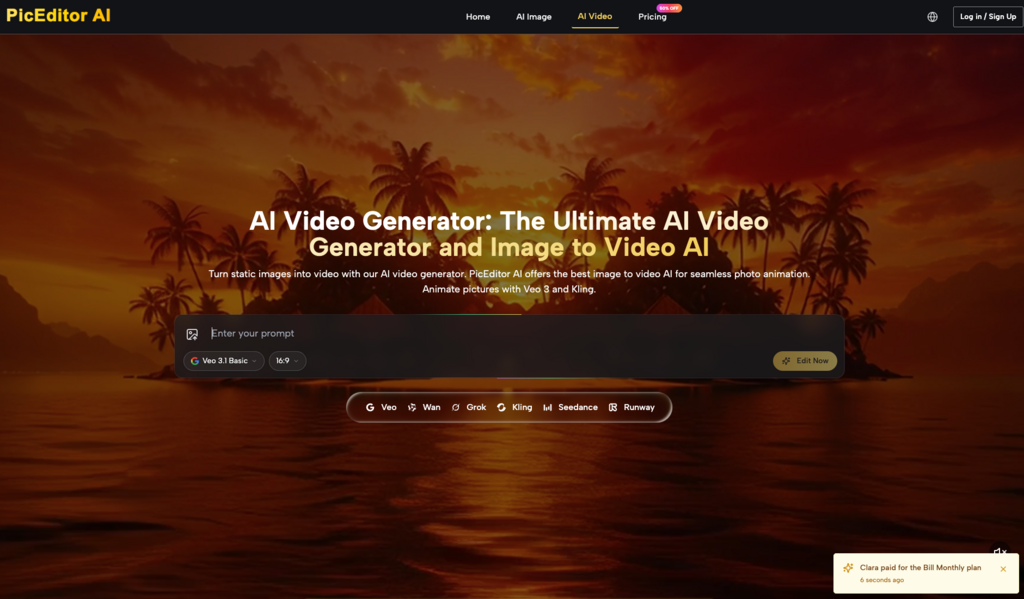

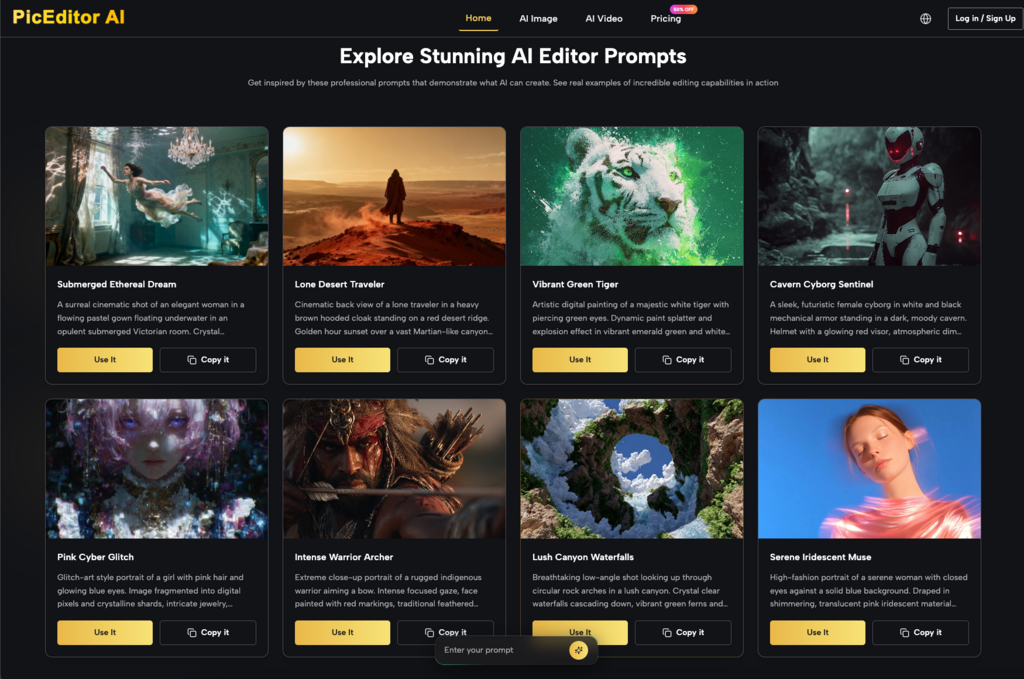

When evaluating which tools to bring into a production pipeline, the focus should be on model variety and flexibility. A tool like PicEditor AI is useful precisely because it doesn’t lock the user into a single look. By offering multiple models—ranging from the high-speed Nano to the high-detail Flux—it allows creative teams to choose the “engine” that matches the aesthetic of the specific client or vertical.

Integrating an AI Photo Editor into a daily sprint cycle changes the math of asset production. In a traditional workflow, a concept-to-live-ad cycle might take three to five days, accounting for revisions and masking. With a refined AI-augmented pipeline, that cycle can drop to under sixty minutes.

The cost-per-asset reduction is the final piece of the puzzle. When you reduce the need for expensive reshoots and minimize the hours spent on tedious manual retouching, the margin for every campaign increases. Performance marketing is a game of margins; by moving from a model of “creation” to a model of “curation and surgical editing,” teams can produce higher-quality assets at a volume that was previously impossible.
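The margin argument can be made concrete with a small cost model. The sketch below compares a re-prompting workflow with an edit-first workflow; the function name `cost_per_usable_asset` is hypothetical, and every number in the example (per-attempt tool cost, hourly rate, minutes per edit) is an illustrative placeholder rather than measured data. Only the “40 iterations in 40 minutes” scenario is taken from the re-prompting example earlier in the article.

```python
def cost_per_usable_asset(attempts, cost_per_attempt, minutes_per_attempt,
                          hourly_rate, usable_assets):
    """Total tool spend plus designer labor, divided by assets that ship."""
    if usable_assets <= 0:
        raise ValueError("usable_assets must be positive")
    tool_cost = attempts * cost_per_attempt
    labor_cost = attempts * minutes_per_attempt * hourly_rate / 60
    return (tool_cost + labor_cost) / usable_assets


# Placeholder inputs: adjust to your own tool pricing and team rates.
regenerate = cost_per_usable_asset(
    attempts=40, cost_per_attempt=0.05, minutes_per_attempt=1,
    hourly_rate=60, usable_assets=1,
)
edit_first = cost_per_usable_asset(
    attempts=3, cost_per_attempt=0.05, minutes_per_attempt=5,
    hourly_rate=60, usable_assets=1,
)

print(f"re-prompting: {regenerate:.2f} per usable asset")
print(f"edit-first:   {edit_first:.2f} per usable asset")
```

Under these assumed inputs, labor dominates the total, which is why cutting the number of attempts matters more than the per-generation price.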

Ultimately, the winner in the current creative landscape won’t be the person who writes the best prompts. It will be the team that builds the best system for refining, upscaling, and adapting AI outputs into production-ready collateral. Precision always beats volume in the world of conversion, and surgical AI editing is the only way to achieve that precision at scale.