In today’s digital world, terms like bandwidth and throughput are frequently used in networking, but they’re often misunderstood or used interchangeably. Whether you’re streaming a 4K video, managing a cloud-based application, or setting up a local network, understanding the distinction between bandwidth and throughput is crucial for optimizing performance. This article dives deep into their definitions, differences, real-world applications, and factors affecting network efficiency, with practical examples and tools to measure them.

Bandwidth

In computer networks, bandwidth is the maximum amount of data that can be carried from one point to another in a given period (generally one second). Network bandwidth is usually expressed in bits per second (bps), kilobits per second (Kbps), megabits per second (Mbps), or gigabits per second (Gbps). It is sometimes thought of as the speed at which bits travel, but this is not accurate.

For example, in both 100 Mbps and 1,000 Mbps Ethernet, the signals propagate along the cable at essentially the same speed. The difference is the number of bits transmitted per second.

A combination of factors determines the practical bandwidth of a network:

- The properties of the physical media.

- The technologies chosen for signaling and detecting network signals.

In other words, physical media properties, current technologies, and the laws of physics all play a role in determining the available bandwidth.

Connections can be symmetrical, meaning the data capacity is the same in both directions (upload and download), or asymmetrical, meaning the download and upload capacities are unequal. In asymmetrical connections, the upload capacity is typically smaller than the download capacity.

Modern networks support the transfer of vast numbers of bits per second. Instead of quoting speeds of 10,000 or 100,000 bps, networks commonly express per-second performance in terms like:

- 1Kbps = 1,000 bits per second

- 1Mbps = 1,000 Kbps

- 1Gbps = 1,000 Mbps

So, a network rated in Mbps is much faster than one rated in Kbps but slower than one rated in Gbps.
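The unit ladder above can be sketched in a few lines of Python. This is only an illustration; the function name `format_rate` is a hypothetical helper, not part of any library.

```python
def format_rate(bits_per_second: float) -> str:
    """Render a raw bit rate using the decimal units listed above."""
    units = (("Gbps", 1_000_000_000), ("Mbps", 1_000_000), ("Kbps", 1_000))
    for unit, factor in units:
        if bits_per_second >= factor:
            # :g drops trailing zeros, so 56.0 prints as 56
            return f"{bits_per_second / factor:g} {unit}"
    return f"{bits_per_second:g} bps"


print(format_rate(56_000))         # 56 Kbps
print(format_rate(100_000_000))    # 100 Mbps
print(format_rate(2_500_000_000))  # 2.5 Gbps
```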

Factors Influencing Bandwidth:

- Physical infrastructure (fiber-optic vs. copper cables).

- Network hardware (routers, modems).

- ISP-imposed limits.

Importance: Higher bandwidth supports more devices and data-intensive activities (e.g., 4K streaming, large downloads).

Examples of Performance Measurements

The standard examples are the following:

| Bandwidth | Technology |
| --- | --- |
| 56 kbit/s | Modem / Dialup |
| 1.5 Mbit/s | ADSL Lite |
| 1.544 Mbit/s | T1/DS1 |
| 10 Mbit/s | Ethernet |
| 11 Mbit/s | Wireless 802.11b |
| 44.736 Mbit/s | T3/DS3 |
| 54 Mbit/s | Wireless 802.11g |
| 100 Mbit/s | Fast Ethernet |
| 155 Mbit/s | OC3 |
| 600 Mbit/s | Wireless 802.11n |
| 622 Mbit/s | OC12 |
| 1 Gbit/s | Gigabit Ethernet |
| 2.5 Gbit/s | OC48 |
| 9.6 Gbit/s | OC192 |
| 10 Gbit/s | 10 Gigabit Ethernet |
| 100 Gbit/s | 100 Gigabit Ethernet |

Bits and Bytes

Storage capacity, such as that of hard disks and USB drives, is usually measured in bytes: kilobytes (KB), megabytes (MB), and gigabytes (GB). One byte is 8 bits, and in this type of usage K represents a multiplier of 1,024 rather than 1,000: 1 KB = 1,024 bytes, 1 MB = 1,024 KB, and 1 GB = 1,024 MB.
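Because link rates are quoted in bits (with multipliers of 1,000) and file sizes in bytes (with multipliers of 1,024), estimating a transfer time takes a small conversion. The sketch below assumes an ideal link with no overhead; `transfer_seconds` is a hypothetical helper name.

```python
BITS_PER_BYTE = 8


def transfer_seconds(file_size_gb: float, rate_mbps: float) -> float:
    """Ideal time to move a file of file_size_gb (1 GB = 1,024**3 bytes)
    over a link running at rate_mbps (1 Mbps = 1,000,000 bits per second)."""
    size_bits = file_size_gb * 1024**3 * BITS_PER_BYTE
    return size_bits / (rate_mbps * 1_000_000)


# A 1 GB file over a 100 Mbps link, ignoring all overhead
print(round(transfer_seconds(1, 100), 1))  # 85.9
```

Note that the result is in seconds of ideal transfer time; real transfers take longer because throughput is usually below the rated bandwidth.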

Bandwidth in Modern Networks

In 2025, bandwidth demands are higher than ever due to technologies like 5G, Internet of Things (IoT), and artificial intelligence (AI)-driven applications. For instance:

- 5G Networks: Offer theoretical peak bandwidth of up to 10 Gbps, and their low latency enables applications like autonomous vehicles.

- Cloud Computing: Services like AWS or Azure require high bandwidth to handle massive data transfers for AI model training.

- Streaming: 4K video streaming on Netflix or YouTube typically needs roughly 15–25 Mbps of bandwidth per stream.

Bandwidth is determined by the physical infrastructure (e.g., fiber optic cables, Ethernet) and the service provider’s plan. Devices like routers and modems also play a role in supporting the allocated bandwidth.

Throughput

Throughput is the measure of the transfer of bits across the media over a given period; in other words, how many units of information a system can process in a given time. For several reasons, it generally does not match the bandwidth specified in physical layer implementations. Many factors influence it, including the following:

- The type of traffic

- The amount of traffic

- The latency created by the number of network devices between the source and destination

- Error rate

Latency is the time, including delays, it takes for data to travel from one point to another. In networks with multiple segments, throughput cannot be faster than the slowest link in the path from source to destination. Even if all or most segments have high bandwidth, a single segment with low throughput is enough to create a bottleneck for the entire path.
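The slowest-link rule can be expressed as a minimum over the segments of a path. The link values below are hypothetical, and the sketch ignores every other factor that affects throughput.

```python
def path_bandwidth_mbps(link_bandwidths_mbps: list[float]) -> float:
    """Upper bound on end-to-end throughput: the slowest link on the path."""
    return min(link_bandwidths_mbps)


# Hypothetical path: gigabit LAN -> 100 Mbps access link -> 10 Gbps backbone
path = [1000, 100, 10_000]
print(path_bandwidth_mbps(path))  # 100
```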

Throughput is often described as the average transfer rate over a medium. This measurement includes all protocol overhead, such as packet headers, as well as packets that are retransmitted because of collisions or errors.

Another measurement, goodput, evaluates the transfer of usable data. Goodput is the usable data transferred over a given period: throughput minus the traffic overhead for establishing sessions, acknowledgments, and encapsulation. In other words, goodput counts only the original data.
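As a rough illustration of encapsulation overhead, the sketch below estimates the best-case goodput of a TCP stream over Ethernet. It assumes full-size frames with a 1,500-byte MTU, IPv4 and TCP headers of 20 bytes each with no options, and it ignores acknowledgments, session setup, and retransmissions, so real goodput will be lower. The function name is hypothetical.

```python
MTU = 1500                   # bytes of IP packet carried per Ethernet frame
IP_HEADER = 20               # IPv4 header without options
TCP_HEADER = 20              # TCP header without options
ETHERNET_WIRE_OVERHEAD = 38  # preamble 8 + header 14 + FCS 4 + inter-frame gap 12


def best_case_goodput_mbps(link_rate_mbps: float) -> float:
    """Share of the link rate left for application data in full-size frames."""
    payload_bytes = MTU - IP_HEADER - TCP_HEADER     # 1,460 bytes of user data
    bytes_on_wire = MTU + ETHERNET_WIRE_OVERHEAD     # 1,538 bytes per frame
    return link_rate_mbps * payload_bytes / bytes_on_wire


print(round(best_case_goodput_mbps(1000), 1))  # 949.3
```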

Throughput in Real-World Scenarios

Throughput varies based on network conditions and use cases:

- Video Conferencing: A Zoom call may require 3–5 Mbps of throughput for HD quality, but packet loss can degrade performance.

- File Transfers: Downloading a large file from a cloud server depends on both server bandwidth and network throughput.

- Gaming: Online games like Fortnite need consistent throughput (~1–3 Mbps) to avoid lag, prioritizing low latency over raw speed.

Key Differences Between Bandwidth and Throughput

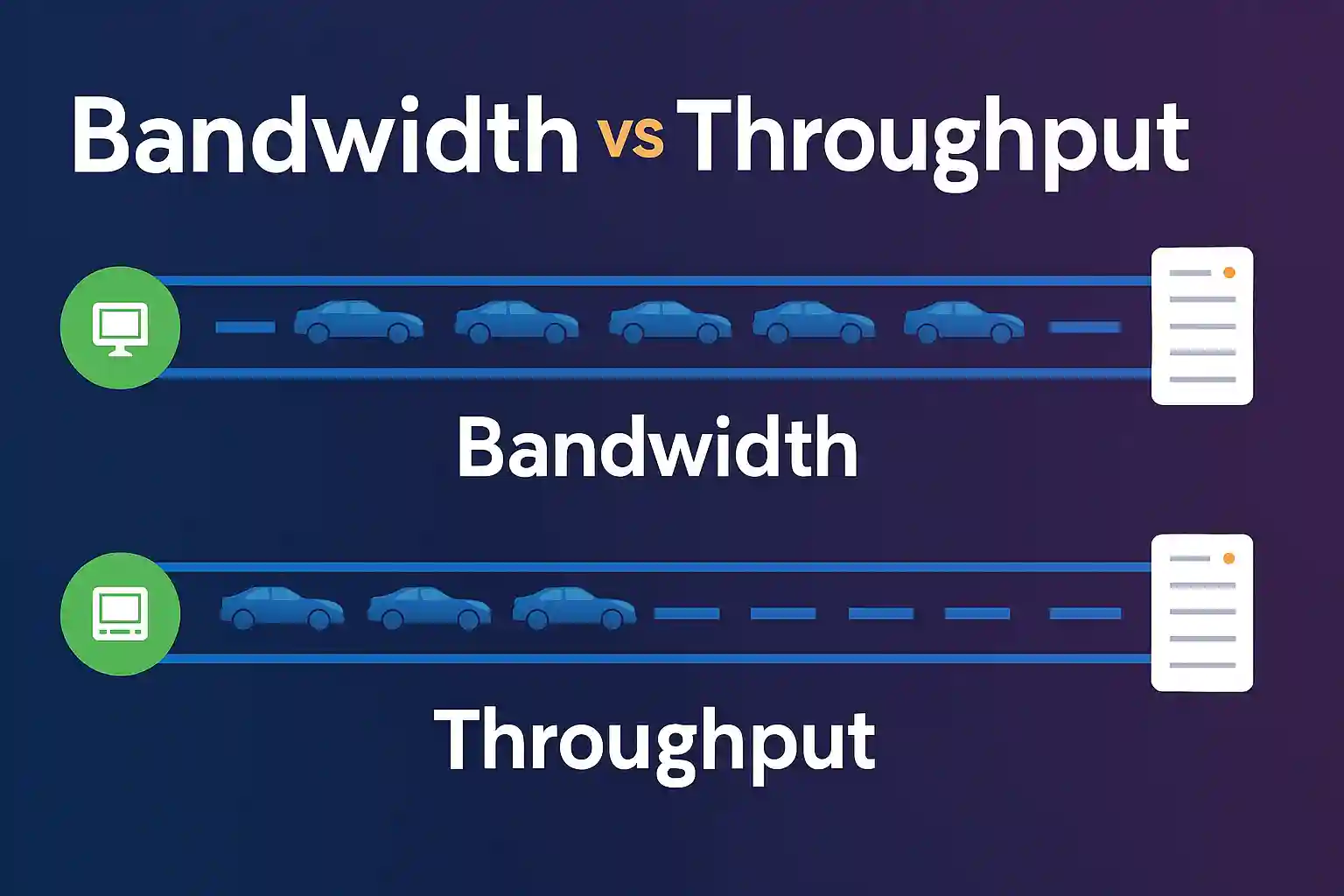

Bandwidth and throughput are related concepts but different from each other. Bandwidth is like a pipe; the larger the pipe, the more water can flow through it. Throughput is the amount of water that flows through the pipe.

To clarify, here’s a detailed comparison:

| Aspect | Bandwidth | Throughput |
| --- | --- | --- |
| Definition | Maximum data transfer capacity | Actual data transferred |
| Measurement | bps, Mbps, Gbps | bps, Mbps, Gbps |
| Nature | Theoretical maximum | Real-world performance |
| Example | 100 Mbps internet plan | 70 Mbps achieved during a download |
| Factors Affected By | Physical infrastructure, ISP plan | Latency, packet loss, congestion |

A highway analogy works as well: bandwidth is like the number of lanes; the more lanes the highway has, the more vehicles can travel on it. Throughput is the number of vehicles that actually make it to the destination in a given time. Real-world factors like traffic jams (congestion) or roadblocks (latency) reduce throughput below the highway’s capacity.

Factors Affecting Throughput

Throughput is always less than or equal to bandwidth. Several factors limit the actual data transfer rate:

- Latency: The time it takes for data to travel from source to destination. High latency (e.g., in satellite internet) reduces throughput, even with high bandwidth. For example, 5G networks achieve low latency (~1–10 ms), boosting throughput for real-time applications.

- Packet Loss: When data packets are dropped due to network errors, throughput decreases. This is common in Wi-Fi networks with interference.

- Network Congestion: During peak hours, multiple devices sharing bandwidth (e.g., in a household or office) lower throughput. For instance, a 100 Mbps connection split among 10 devices may yield ~10 Mbps per device.

- Device Limitations: Outdated routers, low-quality cables (e.g., Cat5 vs. Cat6 Ethernet), or software overhead can bottleneck throughput.

- Protocol Overhead: Protocols like TCP/IP add headers to data packets, reducing effective throughput. For example, a 100 Mbps link may lose 5–10% capacity to overhead.
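One way to see why high latency lowers throughput even on a high-bandwidth link: a sender that may keep only a fixed window of unacknowledged data in flight cannot exceed window size divided by round-trip time. The sketch below assumes a single TCP-style connection with a 65,535-byte window and hypothetical round-trip times; actual stacks often scale the window, so treat the numbers as illustrative only.

```python
def window_limited_mbps(window_bytes: int, rtt_seconds: float) -> float:
    """Maximum rate when at most window_bytes can be unacknowledged per round trip."""
    return window_bytes * 8 / rtt_seconds / 1_000_000


def achievable_mbps(bandwidth_mbps: float, window_bytes: int, rtt_seconds: float) -> float:
    """Throughput is capped by whichever is lower: the link or the window limit."""
    return min(bandwidth_mbps, window_limited_mbps(window_bytes, rtt_seconds))


# Same 100 Mbps link, same window, different (hypothetical) round-trip times
print(round(achievable_mbps(100.0, 65_535, 0.600), 2))  # 0.87  (satellite-like RTT)
print(round(achievable_mbps(100.0, 65_535, 0.005), 2))  # 100.0 (low-latency RTT)
```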

Mathematical Perspective

Throughput can be approximated as:

\[ \text{Throughput} = \text{Bandwidth} \times (1 - \text{Packet Loss Rate}) \div \text{Latency Factor} \]

Where:

- Packet Loss Rate is the fraction of packets lost (e.g., 2% = 0.02).

- Latency Factor is a divisor of at least 1 that accounts for delays (e.g., 1.1 for moderate latency; higher latency increases the factor and so lowers throughput).

For example, a 100 Mbps connection with 2% packet loss and moderate latency might yield:

\[ \text{Throughput} = 100 \times (1 - 0.02) \div 1.1 \approx 89 \text{ Mbps} \]
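The approximation above translates directly into code. This is a sketch of the article's rule of thumb, not a precise network model; the function name and the input checks (loss rate between 0 and 1, latency factor of at least 1) are assumptions added for illustration.

```python
def approx_throughput_mbps(
    bandwidth_mbps: float, packet_loss_rate: float, latency_factor: float
) -> float:
    """Bandwidth x (1 - packet loss rate) / latency factor."""
    if not 0 <= packet_loss_rate <= 1:
        raise ValueError("packet_loss_rate must be a fraction between 0 and 1")
    if latency_factor < 1:
        # Assumption: a factor below 1 would push throughput above bandwidth
        raise ValueError("latency_factor must be at least 1")
    return bandwidth_mbps * (1 - packet_loss_rate) / latency_factor


# The worked example: 100 Mbps, 2% packet loss, latency factor 1.1
print(round(approx_throughput_mbps(100, 0.02, 1.1)))  # 89
```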

Measuring Bandwidth and Throughput

To optimize network performance, you need to measure both bandwidth and throughput:

- Bandwidth: Check your ISP plan or router specifications for theoretical capacity. Speed tests like Speedtest by Ookla estimate the bandwidth available to you by measuring throughput to a nearby server.

- Throughput: Use tools to measure actual performance:

  - iPerf: A command-line tool for testing throughput between two devices.

  - Netperf: Measures throughput in enterprise environments.

  - PingPlotter: Tracks latency and packet loss affecting throughput.

For example, running iPerf on a 1 Gbps LAN might show about 950 Mbps of throughput. The gap is mostly protocol overhead (frame, IP, and TCP headers) rather than a fault, so a result in this range indicates an efficient link.
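To show the principle behind such tools (send a known amount of data, time it, divide), here is a minimal Python sketch that pushes bytes through a TCP connection on the local machine. It is not how iPerf is implemented, and loopback results say nothing about a real network; the function names and the 20 MB default are assumptions made for the example.

```python
import socket
import threading
import time


def throughput_mbps(num_bytes: int, seconds: float) -> float:
    """Convert a byte count and a duration into megabits per second."""
    return num_bytes * 8 / seconds / 1_000_000


def measure_loopback_throughput(total_bytes: int = 20_000_000) -> float:
    """Send total_bytes over a local TCP connection and return the rate in Mbps."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]
    received = 0

    def receive_all() -> None:
        nonlocal received
        conn, _ = server.accept()
        with conn:
            # recv returns b"" once the sender closes the connection
            while chunk := conn.recv(65536):
                received += len(chunk)

    receiver = threading.Thread(target=receive_all)
    receiver.start()

    payload = b"\0" * 65536
    start = time.perf_counter()
    with socket.create_connection(("127.0.0.1", port)) as client:
        sent = 0
        while sent < total_bytes:
            chunk = payload[: total_bytes - sent]
            client.sendall(chunk)
            sent += len(chunk)
    receiver.join()  # wait until the receiver has read everything
    elapsed = time.perf_counter() - start
    server.close()
    return throughput_mbps(received, elapsed)
```

Calling `measure_loopback_throughput()` returns a figure that depends entirely on the machine it runs on. To test a real path, the sender and receiver would run on two different devices, which is what iPerf's client and server modes do.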

Real-World Applications

Understanding bandwidth and throughput is critical in various scenarios:

- Enterprise Networks: Companies use high-bandwidth fiber connections (e.g., 10 Gbps) to ensure sufficient throughput for cloud backups or AI workloads.

- IoT Devices: Smart home devices (e.g., security cameras) require consistent throughput (~1–5 Mbps) to stream data without buffering.

- Gaming and Streaming: High throughput ensures smooth gameplay or 4K streaming, while low latency prevents lag.

- 5G and Edge Computing: 5G’s high bandwidth supports throughput-intensive applications like remote surgeries or autonomous drones.

How to Optimize Throughput

To maximize throughput and approach your bandwidth capacity:

- Upgrade Hardware: Use modern routers (e.g., Wi-Fi 6) and high-quality cables (e.g., Cat6 or fiber).

- Reduce Congestion: Limit connected devices or prioritize traffic using Quality of Service (QoS) settings.

- Minimize Latency: Choose low-latency connections (e.g., fiber over satellite) and optimize routing paths.

- Monitor Packet Loss: Use tools like PingPlotter to identify and fix network errors.

- Update Firmware/Software: Ensure routers and devices run the latest firmware to avoid bottlenecks.

Conclusion

Bandwidth and throughput are fundamental to networking, but they serve distinct roles. Bandwidth sets the theoretical limit, while throughput reflects real-world performance shaped by latency, congestion, and other factors. By understanding their differences and optimizing your network, you can achieve better performance for streaming, gaming, enterprise applications, or IoT deployments.


FAQs

What is bandwidth?

Bandwidth refers to the maximum amount of data that can be transmitted over a network in a given time. It is measured in bits per second (bps) and represents the network’s capacity.

What is throughput?

Throughput is the actual amount of data successfully transmitted over a network in a given period. It can be lower than bandwidth due to interference, congestion, or hardware limitations.

How do bandwidth and throughput differ?

Bandwidth is the theoretical maximum data transfer rate, while throughput is the actual performance achieved. Factors like network congestion and latency affect throughput.

Why is bandwidth important for network performance?

Bandwidth determines how much data can travel at once, directly impacting loading speeds, streaming quality, and overall network responsiveness.

How can bandwidth and throughput be optimized?

Improving network hardware, reducing congestion, managing traffic flow, and optimizing configurations help maximize both bandwidth and throughput for efficiency.