CCNA

Cisco Certified Network Associate certification resources

Classful vs Classless Addressing Definitive Guide 2025 – From Confusion to Confidence in IP Addressing

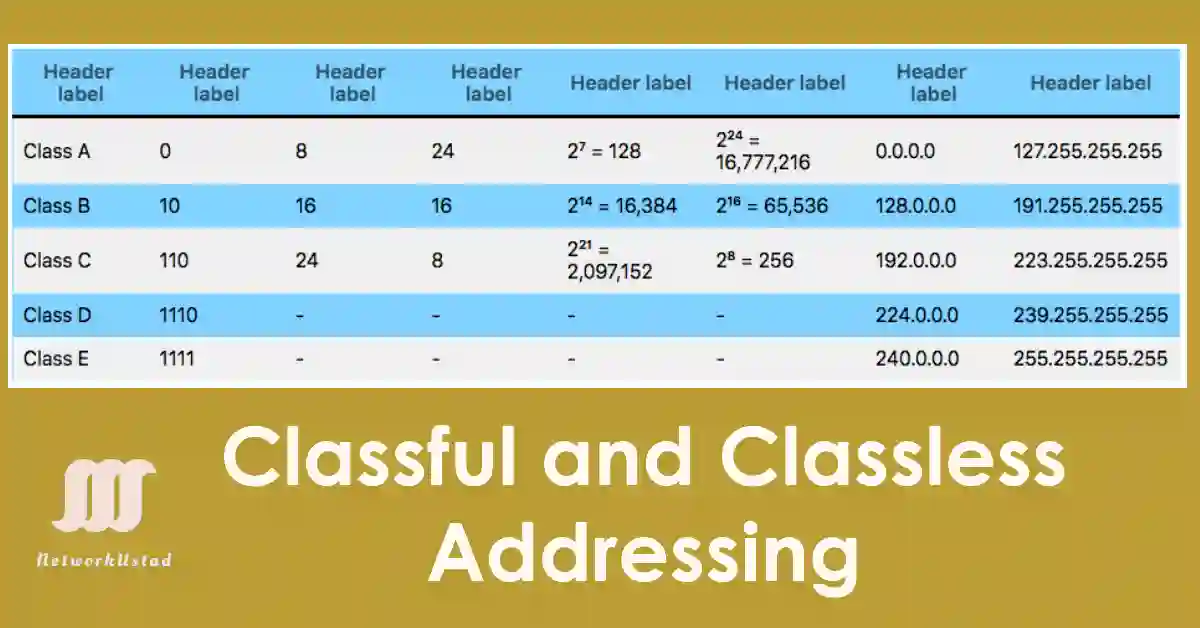

Classful addressing emerged in the early Internet (1980s) with fixed Class A, B, and C ranges, whose wasteful allocation contributed to IPv4 address exhaustion. The introduction of CIDR in 1993 marked the shift to classless addressing, allowing flexible prefixes (e.g., /20) and slowing IPv4 exhaustion ahead of the IPv4-to-IPv6 transition. Classful and Classless addressing are terms describing IP address structure, with classless...
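As a small illustration of the flexible prefixes mentioned above, the following sketch uses Python's standard `ipaddress` module to inspect a /20 block. The address `172.16.16.0/20` and the helper name `describe_prefix` are assumptions chosen for the example, not taken from the article.

```python
import ipaddress


def describe_prefix(cidr: str) -> dict:
    """Summarize a classless (CIDR) IPv4 block such as '172.16.16.0/20'."""
    # strict=True rejects input whose host bits are set (e.g. 172.16.17.0/20).
    net = ipaddress.ip_network(cidr, strict=True)
    return {
        "network": str(net.network_address),
        "netmask": str(net.netmask),
        "broadcast": str(net.broadcast_address),
        "total_addresses": net.num_addresses,
    }


# Hypothetical example block: under classful rules 172.16.0.0 would be a
# fixed /16 (Class B); CIDR lets us carve out a /20 instead.
info = describe_prefix("172.16.16.0/20")
print(info)
```

A /20 leaves 12 host bits, so the block spans 4096 addresses, a size that no classful network could express.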

Collision Domains and Broadcast Domains: A Complete Guide 2025

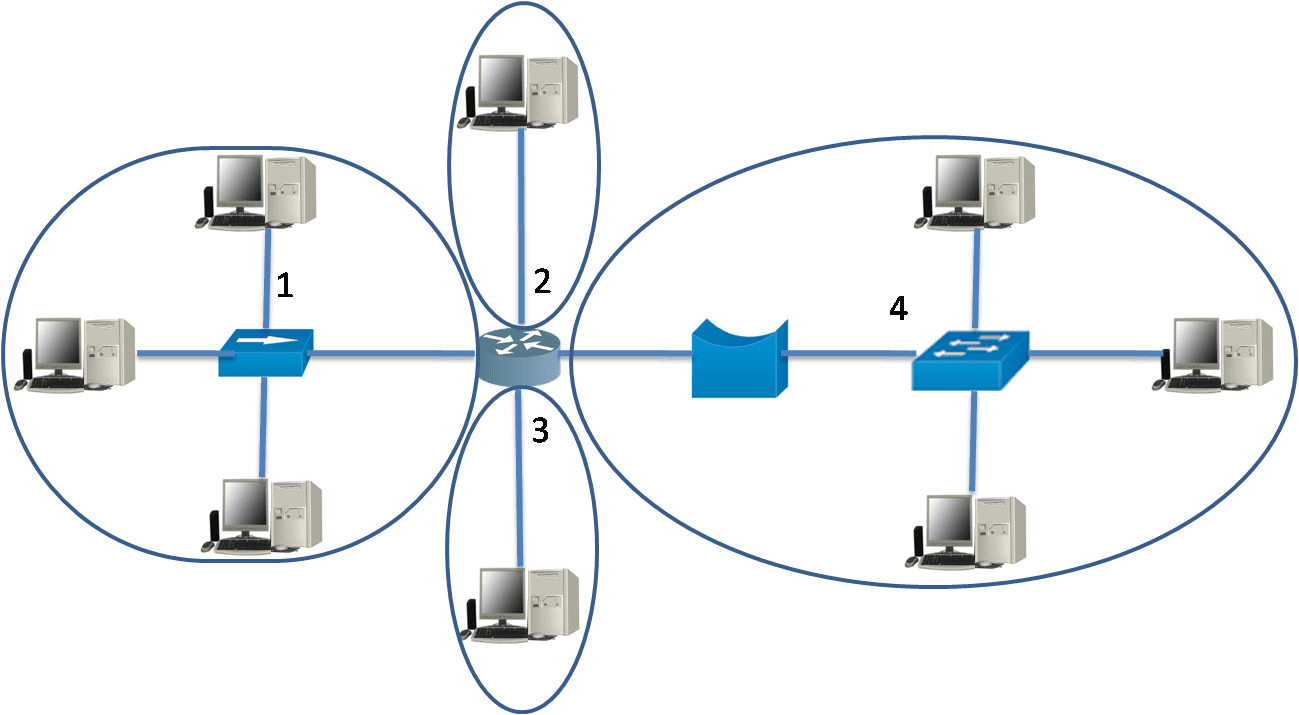

In networking, understanding collision domains and broadcast domains is fundamental to designing efficient and scalable networks, a critical skill for CCNA and CCNP certifications. A collision domain is a network segment where frames may collide if multiple devices transmit simultaneously, a common challenge in older Ethernet setups like those using hubs....

Subnetting Unveiled: Master the Art of Network Segmentation 2025

Subnetting allows a network administrator to create smaller networks, known as sub-networks or subnets, inside a larger network by borrowing bits from the Host ID portion of the address. It supports a practical IP addressing plan by partitioning a single physical network into several smaller logical subnets, enhancing control...

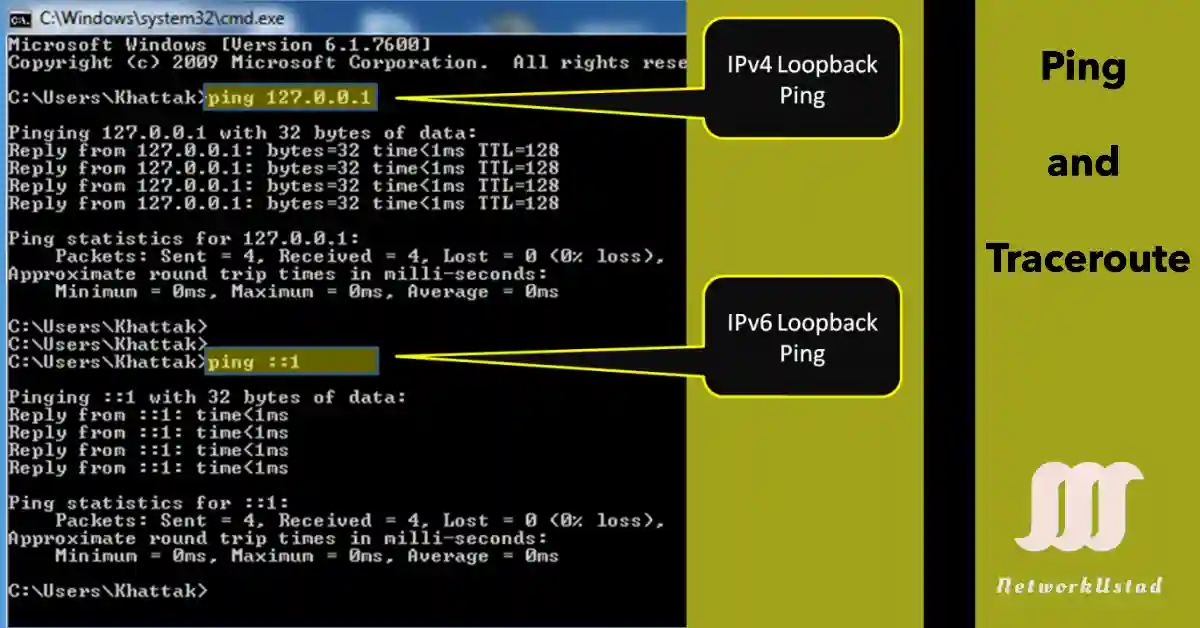

Importance of Ping and Traceroute – Exclusive Guide 2025

Ping and traceroute are foundational tools for network troubleshooting, widely utilized in CCNA and CCNP certifications. These utilities leverage ICMP (Internet Control Message Protocol) to diagnose connectivity, latency, and routing issues, making them indispensable in both lab environments and real-world network management. For CCNA students, mastering ping helps verify host reachability, while CCNP students can...

ICMPv6 NS and RA Messages: Boost Your CCNA Skills With This Detailed Guide and Interactive Simulator!

The Internet Control Message Protocol (ICMP) is a critical component of the IP suite, used for error reporting, diagnostics, and network management. While ICMPv4 supports IPv4 networks, ICMPv6 is its enhanced counterpart for IPv6, introducing new features like the Neighbor Discovery Protocol (NDP). For CCNA and CCNP students, understanding ICMP is essential for configuring and...

Internet Control Message Protocol (ICMP): The Ultimate Guide for Network Success with ICMPv4 Debug Simulator in 2025

The Internet Control Message Protocol (ICMP) is a critical network layer protocol within the TCP/IP suite, enabling error reporting and diagnostics for IPv4 and IPv6. The Internet Protocol (IP) is unreliable by design: it has no built-in mechanism for sending messages when specific errors occur. The Internet Control Message Protocol (ICMP) services send messages...

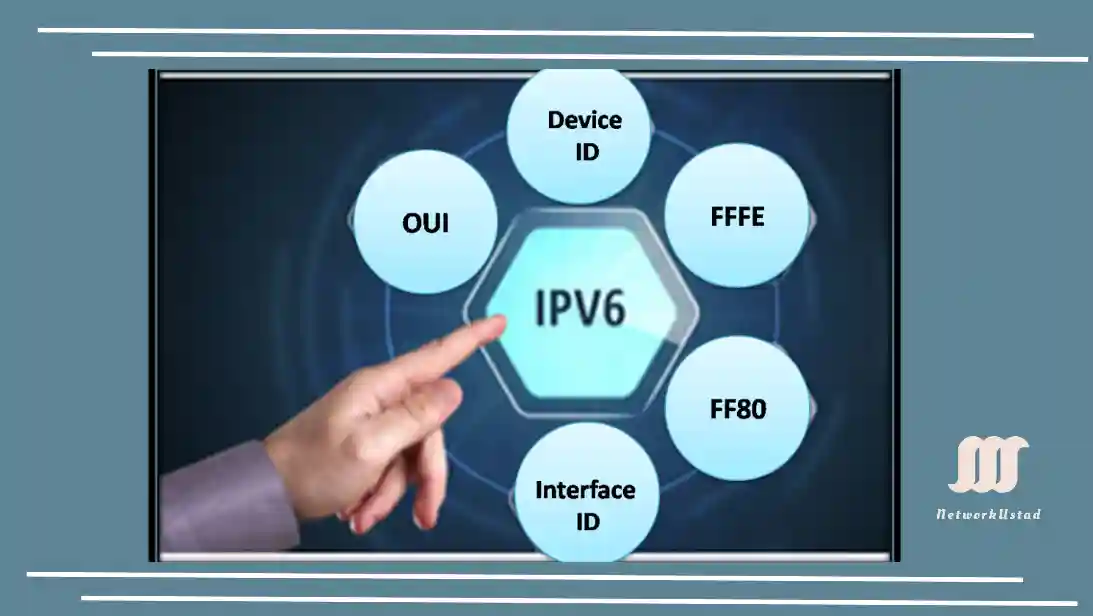

EUI-64 Process and Randomly Generated IPv6: Easy-to-Understand Guide

After a client receives a Router Advertisement (RA) that indicates Stateless Address Autoconfiguration (SLAAC), it must generate its Interface ID. Unlike stateful DHCPv6, SLAAC supplies only the prefix portion (typically /64) from the RA, while the client autonomously creates the 64-bit Interface ID. This ID can be derived from the MAC address using...
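The EUI-64 process named in the title can be sketched in a few lines: split the 48-bit MAC address in half, insert `FFFE` in the middle, and flip the universal/local bit (the second-least-significant bit of the first octet). This is a minimal sketch; the function name `eui64_interface_id` and the sample MAC address are hypothetical.

```python
def eui64_interface_id(mac: str) -> str:
    """Derive a modified EUI-64 Interface ID from a 48-bit MAC address."""
    octets = bytearray(int(part, 16) for part in mac.replace("-", ":").split(":"))
    if len(octets) != 6:
        raise ValueError("expected a 48-bit MAC address with six octets")

    # Flip the universal/local (U/L) bit of the first octet.
    octets[0] ^= 0x02

    # Insert FF:FE between the OUI (first 3 octets) and the device part.
    eui = octets[:3] + b"\xff\xfe" + octets[3:]

    # Format as four 16-bit hexadecimal groups, as in an IPv6 address.
    return ":".join(f"{eui[i]:02x}{eui[i + 1]:02x}" for i in range(0, 8, 2))


# Hypothetical MAC address used only for illustration.
interface_id = eui64_interface_id("00:1A:2B:3C:4D:5E")
print(interface_id)  # 021a:2bff:fe3c:4d5e
```

Appending this Interface ID to a /64 prefix learned from the RA yields the full 128-bit address.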

Unicast, Multicast and Broadcast Communication

By the end of 2025, global internet traffic is projected to exceed 181 zettabytes, driven by video streaming, IoT devices, and 5G connectivity. Efficient data transmission methods like unicast, multicast, and broadcast are critical to managing this surge. This article breaks down these three communication types, their technical workings, modern applications, and emerging trends shaping networks today. This article,...

Mastering Host Address, Network Prefix, Network ID, and Broadcast ID in 2025

This article, “Mastering Prefix, Network ID, Broadcast ID, and Host Address”, is the continuation of my previous articles about the IP address, which are the following: “IP address Classes- Exclusive Explanation”, “Positional Number System and Examples (Updated 2025)”, and “Network and Host Portion of IPv4 Address”. So, you need to study the above articles to understand...

Master Network and Host Portion of IPv4: Ignite Your Networking Skills Today!

Each network requires a unique network number, and each host requires a unique IP address. The IPv4 address is a 32-bit number that uniquely identifies a network and host. In 2025, mastering IPv4 addressing is crucial for CCNA preparation and managing networks with 5G, IoT, and high-speed 100G/400G/800G interfaces, where efficient IP allocation remains vital....
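To make the network and host split of a 32-bit IPv4 address concrete, here is a minimal sketch that applies a prefix mask with bitwise operations. The helper name `split_address` and the sample address `192.168.10.37/24` are assumptions for illustration.

```python
import ipaddress


def split_address(address: str, prefix_len: int) -> tuple[str, int]:
    """Return (network address, host number) for an IPv4 address and prefix length."""
    if not 0 <= prefix_len <= 32:
        raise ValueError("prefix length must be between 0 and 32")

    addr = int(ipaddress.IPv4Address(address))

    # Build a 32-bit mask with prefix_len leading one-bits.
    mask = (0xFFFFFFFF << (32 - prefix_len)) & 0xFFFFFFFF

    network = addr & mask             # network portion: bits under the mask
    host = addr & ~mask & 0xFFFFFFFF  # host portion: the remaining bits
    return str(ipaddress.IPv4Address(network)), host


# Hypothetical host used only for illustration.
network, host = split_address("192.168.10.37", 24)
print(network, host)  # 192.168.10.0 37
```

With a /24 prefix the first 24 bits identify the network and the last 8 bits identify the host within it.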